How I built my Kubernetes Homelab – Part 4

With my Kubernetes cluster up and running, I want to take the next step. As a former storage guy I need storage, and so do the running applications. Without persistent storage to save and snapshot data, everything I do inside a pod is gone when the pod is redeployed, and that can happen quite often.

As I mentioned before, my little homelab runs on vSphere 6.7 Update 3, which can act as Cloud Native Storage (CNS). CNS is a feature that makes Kubernetes aware of how to provision storage on demand on vSphere, in a fully automated and scalable fashion.

Installing the vSphere Cloud Provider Interface (CPI)

First of all we need to create a secret that stores the user credentials for vCenter access. In my lab I used my existing Administrator account. If you want a more secure setup, you can follow the VMware documentation to create a role for a user with only the necessary rights.

marco@lab-kube-m1:~$ cat cpi-global-secret.yaml
apiVersion: v1
kind: Secret
metadata:
  name: cpi-global-secret
  namespace: kube-system
stringData:
  lab-vc01.homelab.horstmann.in.username: "administrator@vsphere.local"
  lab-vc01.homelab.horstmann.in.password: "XXXXXXXX"

marco@lab-kube-m1:~/yaml/vcenter$ kubectl create -f cpi-global-secret.yaml
secret/cpi-global-secret created

We can verify that the credentials secret was created successfully in the kube-system namespace with this command.

marco@lab-kube-m1:~$ kubectl get secret cpi-global-secret --namespace=kube-system
NAME                TYPE     DATA   AGE
cpi-global-secret   Opaque   2      3m
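If you would rather not keep the password in a YAML file on disk, the same manifest can be generated on the fly. This is only a minimal sketch: the function name render_cpi_secret is my own invention, and it assumes the password contains no double quotes or backslashes, which would break the YAML quoting.

```shell
# Hypothetical helper: print the CPI secret manifest for one vCenter.
# Arguments: <vcenter-fqdn> <username> <password>
# Assumption: the values contain no double quotes or backslashes.
render_cpi_secret() {
  server=$1
  user=$2
  password=$3
  cat <<EOF
apiVersion: v1
kind: Secret
metadata:
  name: cpi-global-secret
  namespace: kube-system
stringData:
  ${server}.username: "${user}"
  ${server}.password: "${password}"
EOF
}
```

It could be used like this, with VC_PASSWORD being an environment variable you set yourself: render_cpi_secret lab-vc01.homelab.horstmann.in administrator@vsphere.local "$VC_PASSWORD" | kubectl create -f -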

The next step is to create a ConfigMap for vSphere. The vsphere.conf file we create in this step can be stored anywhere (except /etc/kubernetes/). This ConfigMap provides the CPI components with the information they need to access the vCenter.

marco@lab-kube-m1:~/# cat vsphere.conf
# Global properties in this section will be used for all specified vCenters unless overridden in VirtualCenter section.
global:
  port: 443
  # set insecureFlag to true if the vCenter uses a self-signed cert
  insecureFlag: true
  # settings for using k8s secret
  secretName: cpi-global-secret
  secretNamespace: kube-system

# vcenter section
vcenter:
  tenant-homelab:
    server: lab-vc01.homelab.horstmann.in
    datacenters:
      - Homelab

marco@lab-kube-m1:~$ kubectl create configmap cloud-config --from-file=vsphere.conf --namespace=kube-system

Before installing the vSphere Cloud Controller Manager we need to make sure that all nodes are tainted with “node.cloudprovider.kubernetes.io/uninitialized=true:NoSchedule”. When a cluster is configured with an external cloud provider, this taint marks the nodes as unusable. The cloud provider that initializes a node removes the taint to make the worker node usable.

marco@lab-kube-m1:~$ kubectl taint node lab-kube-n1 node.cloudprovider.kubernetes.io/uninitialized=true:NoSchedule
marco@lab-kube-m1:~$ kubectl taint node lab-kube-n2 node.cloudprovider.kubernetes.io/uninitialized=true:NoSchedule

# We can check if the taint is set.
marco@lab-kube-m1:~$ kubectl describe nodes | egrep "Taints:|Name:"
Name:    lab-kube-m1
Taints:  node-role.kubernetes.io/master:NoSchedule
Name:    lab-kube-n1
Taints:  node.cloudprovider.kubernetes.io/uninitialized=true:NoSchedule
Name:    lab-kube-n2
Taints:  node.cloudprovider.kubernetes.io/uninitialized=true:NoSchedule
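With more than a handful of workers, typing the taint command per node gets tedious. The following sketch prints one taint command per node name so the list can be reviewed before anything is executed. The function name taint_commands is hypothetical and not part of any tool.

```shell
# Hypothetical helper: print one "kubectl taint" command per node name.
# Nothing is executed here; the output is meant to be reviewed first.
taint_commands() {
  for node in "$@"; do
    printf 'kubectl taint node %s node.cloudprovider.kubernetes.io/uninitialized=true:NoSchedule\n' "$node"
  done
}
```

In my lab this would be called as taint_commands lab-kube-n1 lab-kube-n2, and once the output looks right it can be piped into sh to run the commands.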

To install the vSphere Cloud Provider, we have to deploy three manifests. These manifests create the RBAC roles and bindings and deploy the Cloud Controller Manager as a DaemonSet.

marco@lab-kube-m1:~$ kubectl apply -f https://raw.githubusercontent.com/kubernetes/cloud-provider-vsphere/master/manifests/controller-manager/cloud-controller-manager-roles.yaml
clusterrole.rbac.authorization.k8s.io/system:cloud-controller-manager created

marco@lab-kube-m1:~$ kubectl apply -f https://raw.githubusercontent.com/kubernetes/cloud-provider-vsphere/master/manifests/controller-manager/cloud-controller-manager-role-bindings.yaml
clusterrolebinding.rbac.authorization.k8s.io/system:cloud-controller-manager created

marco@lab-kube-m1:~$ kubectl apply -f https://github.com/kubernetes/cloud-provider-vsphere/raw/master/manifests/controller-manager/vsphere-cloud-controller-manager-ds.yaml
serviceaccount/cloud-controller-manager created
daemonset.extensions/vsphere-cloud-controller-manager created
service/vsphere-cloud-controller-manager created

To check that the Cloud Controller Manager was successfully deployed, use this command and verify that the pod is up and running.

marco@lab-kube-m1:~$ kubectl get pods --namespace=kube-system
NAME                                     READY   STATUS    RESTARTS   AGE
[...]
vsphere-cloud-controller-manager-9xc8n   1/1     Running   9          9d

Once the vSphere Cloud Controller Manager is up and running, the taints on our nodes should be removed and the output for your cluster nodes should look similar to this.

marco@lab-kube-m1:~$ kubectl describe nodes | egrep "Taints:|Name:"
Name:    lab-kube-m1
Taints:  node-role.kubernetes.io/master:NoSchedule
Name:    lab-kube-n1
Taints:  <none>
Name:    lab-kube-n2
Taints:  <none>
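On a larger cluster it is easier to list only the nodes that still carry the taint. The sketch below is one way to do that: it reads a two-column table (node name, taint keys) from stdin and prints the names of nodes that are still uninitialized. The function name uninitialized_nodes is hypothetical, and the sketch assumes the table comes from kubectl get nodes with a custom-columns output.

```shell
# Hypothetical helper: read "NAME TAINTS" rows from stdin (header in line 1)
# and print the nodes whose taint keys still include the uninitialized taint.
uninitialized_nodes() {
  awk 'NR > 1 && index($2, "node.cloudprovider.kubernetes.io/uninitialized") { print $1 }'
}
```

The input could be produced with kubectl get nodes -o 'custom-columns=NAME:.metadata.name,TAINTS:.spec.taints[*].key' | uninitialized_nodes. An empty result means the Cloud Controller Manager has initialized every node.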

Every node should now have a ProviderID in its spec. If it is missing, as it was in my case because of mistakes in the pod networking configuration, you can set the UUIDs manually. Without this information the CSI driver does not work correctly and, for example, cannot attach persistent volumes to our worker nodes. I plan to write a separate blog post about this.

marco@lab-kube-m1:~$ kubectl describe nodes | egrep "Name:|ProviderID:"
Name:        lab-kube-m1
ProviderID:  vsphere://421a73f1-eb70-ae31-8ce0-78a38a0387f2
Name:        lab-kube-n1
ProviderID:  vsphere://421afd60-e13c-fcc5-b6fb-2f2e6b749c1a
Name:        lab-kube-n2
ProviderID:  vsphere://421aadff-58d3-eeed-89e8-6df01b1c1713
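Because a missing ProviderID is easy to overlook in the describe output, a small report can help. This is a sketch under assumptions: the function name provider_id_report is made up, and it expects a two-column table (node name, providerID) on stdin, where kubectl prints <none> for a missing value.

```shell
# Hypothetical helper: read "NAME PROVIDERID" rows from stdin (header in line 1).
# Prints "<node> <uuid>" when a vsphere:// ProviderID is set,
# and "<node> MISSING" otherwise.
provider_id_report() {
  awk 'NR > 1 {
    if ($2 ~ /^vsphere:\/\//) {
      sub(/^vsphere:\/\//, "", $2)   # strip the scheme, keep the VM UUID
      print $1, $2
    } else {
      print $1, "MISSING"
    }
  }'
}
```

One way to feed it is kubectl get nodes -o custom-columns=NAME:.metadata.name,PROVIDERID:.spec.providerID | provider_id_report. Any line ending in MISSING points at a node the CSI driver will not be able to use.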

Install vSphere Container Storage Interface Driver

We need an additional configuration file that provides the access credentials and the vCenter details. I created the file “/etc/kubernetes/csi-vsphere.conf” and used it to generate a secret in the kube-system namespace.

marco@lab-kube-m1:~/# cat /etc/kubernetes/csi-vsphere.conf
[Global]
cluster-id = "Homelab"
cluster-distribution = "Ubuntu"
#ca-file = <ca file path> # optional, use with insecure-flag set to false
#thumbprint = "<cert thumbprint>" # optional, use with insecure-flag set to false without providing ca-file

[VirtualCenter "lab-vc01.homelab.horstmann.in"]
insecure-flag = true
user = "Administrator@vsphere.local"
password = "XXXXXXXX"
port = "443"
datacenters = "Homelab"

marco@lab-kube-m1:~$ kubectl create secret generic vsphere-config-secret --from-file=/etc/kubernetes/csi-vsphere.conf --namespace=kube-system

These credentials are used by the CSI components we deploy last. The manifests create the RBAC roles for the vSphere CSI controller, a Deployment for the vSphere CSI controller, and a DaemonSet for the CSI node, which runs on every node.

marco@lab-kube-m1:~$ kubectl create -f https://raw.githubusercontent.com/kubernetes-sigs/vsphere-csi-driver/master/manifests/v2.1.1/vsphere-67u3/vanilla/rbac/vsphere-csi-controller-rbac.yaml
marco@lab-kube-m1:~$ kubectl create -f https://raw.githubusercontent.com/kubernetes-sigs/vsphere-csi-driver/master/manifests/v2.1.1/vsphere-67u3/vanilla/deploy/vsphere-csi-controller-deployment.yaml

# There is also a CSI node DaemonSet to be deployed, which will run on every node.
marco@lab-kube-m1:~$ kubectl create -f https://raw.githubusercontent.com/kubernetes-sigs/vsphere-csi-driver/master/manifests/v2.1.1/vsphere-67u3/vanilla/deploy/vsphere-csi-node-ds.yaml

We can check with this command if all pods are up and running.

marco@lab-kube-m1:~$ kubectl get pods --namespace=kube-system
NAME                                      READY   STATUS    RESTARTS   AGE
[...]
vsphere-csi-controller-66c54895c5-rtjfb   5/5     Running   17         6m19h
vsphere-csi-node-54fmh                    3/3     Running   0          8m
vsphere-csi-node-d2s4l                    3/3     Running   0          8m
vsphere-csi-node-ddrtq                    3/3     Running   4          8m
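The kube-system namespace is crowded, so a filter that shows only the problem pods can save some scrolling. A minimal sketch, with the hypothetical name not_ready_pods: it reads the normal kubectl get pods table from stdin and prints every pod whose READY counters differ or whose STATUS is not Running. Note that this also flags pods of finished jobs (status Completed), which may be perfectly fine.

```shell
# Hypothetical helper: read the "kubectl get pods" table from stdin
# (header in line 1) and print pods that are not fully ready or not Running.
not_ready_pods() {
  awk 'NR > 1 {
    split($2, ready, "/")            # READY column, e.g. "3/3"
    if (ready[1] != ready[2] || $3 != "Running") print $1, $2, $3
  }'
}
```

To look only at the CSI pods, something like kubectl get pods --namespace=kube-system | grep -E 'NAME|vsphere-csi' | not_ready_pods should do. No output means all of them are up.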

Now everything is in place to connect and manage persistent volumes on vSphere. The only missing part is a default storage class for deploying persistent volumes.

Create a “default” Storage Class

When we want to deploy persistent volumes from vSphere we need to create a storage class. But why do we need a storage class? A storage class allows us to deploy applications to storage with specific parameters. A classic example is to have two storage classes called “ssd” and “hdd”, so that application owners can deploy their application to a storage class with high or low I/O capacity.

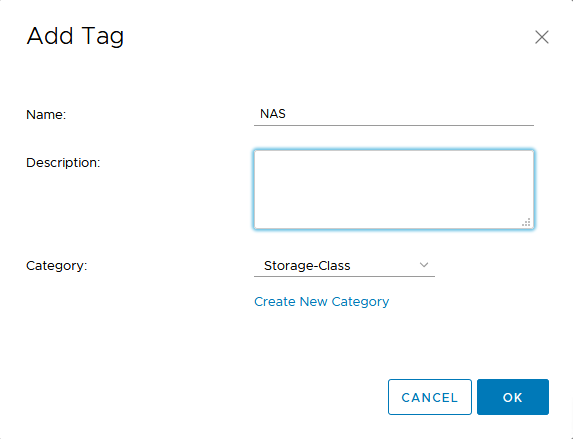

To create our own storage class we need to create a VMware tag and a VM Storage Policy. Here we create a tag category called “Storage-Class”. In my case this tag category can only be used on datastores, and only one tag can be applied to each object.

After creating the tag category we create one or more tags in this category. In my lab we use an NFS export of an old QNAP system, which is mounted as a VMware datastore. Because of this I called my tag “NAS”.

After creating the tags, we can create a VM Storage Policy. The name of this VM Storage Policy, “NAS Storage”, will be used later in a manifest to create the Kubernetes storage class.

In my lab I use a QNAP NFS export as datastore. Because of this we need to select “Enable tag based placement rules”. If you are using vSAN-based storage you need to select the other option. You can find here how to use vSAN to provision storage.

Here we need to select the tag category and “Use storage tagged with”. With Browse Tags we can select the tag “NAS”.

The wizard will now show us if our datastores are compatible with this configuration.

Just a short recap of what we have configured in this VM Storage Policy.

OK, on VMware’s side everything is configured. How do we get this mapped to Kubernetes? Again we have to create a manifest file, called e.g. nas.yaml. In this manifest we choose a name, make this storage class the default, set storagepolicyname to match our vSphere VM Storage Policy and set the filesystem type. Now we can deploy this manifest with kubectl and check that it was created.

#Content of nas.yaml file
kind: StorageClass
apiVersion: storage.k8s.io/v1
metadata:
  name: nas-sc
  annotations:
    storageclass.kubernetes.io/is-default-class: "true"
provisioner: csi.vsphere.vmware.com
parameters:
  storagepolicyname: "NAS Storage"
  fstype: ext4

marco@lab-kube-m1:~$ kubectl create -f nas.yaml
storageclass.storage.k8s.io/nas-sc created

marco@lab-kube-m1:~$ kubectl get storageclass
NAME               PROVISIONER              RECLAIMPOLICY   VOLUMEBINDINGMODE   ALLOWVOLUMEEXPANSION   AGE
nas-sc (default)   csi.vsphere.vmware.com   Delete          Immediate           false                  2s
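A quick way to see whether the storage class really provisions volumes is a small test claim. The following is only a sketch: the function render_pvc and the claim name demo-pvc are hypothetical, and it assumes a ReadWriteOnce block volume is what you want. The storage class defaults to nas-sc from the manifest above.

```shell
# Hypothetical helper: print a PersistentVolumeClaim manifest.
# Arguments: <claim-name> <size, e.g. 1Gi> [storage-class, default nas-sc]
render_pvc() {
  name=$1
  size=$2
  class=${3:-nas-sc}
  cat <<EOF
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: ${name}
spec:
  accessModes:
    - ReadWriteOnce
  storageClassName: ${class}
  resources:
    requests:
      storage: ${size}
EOF
}
```

Running render_pvc demo-pvc 1Gi | kubectl apply -f - and then kubectl get pvc demo-pvc should show the claim as Bound after a short while if provisioning works. The test claim can be removed again with kubectl delete pvc demo-pvc.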

Everything is now ready to deploy our first application to our Kubernetes cluster. Please join me in the next part of this series, where we will create a demo application based on MySQL and phpMyAdmin that I want to use for demonstration purposes.